Data Quality for ksqlDB

Data Quality for Streaming Data

Lightup's ksqlDB connector lets organizations monitor Data Quality for streaming data, which is critical for teams that rely on platforms like Kafka for high-speed, high-volume, real-time transactions.

Without proactive Data Quality monitoring, data issues can cascade quickly in event-driven streaming platforms like Kafka. For example, if incorrect data or invalid schemas go undetected, downstream applications and services in that ecosystem can fail or run with degraded performance.

Because Kafka is built on a partitioned log model to support vast amounts of streaming data, it lacks the structured tables and schemas found in relational databases. As a result, traditional Data Quality checks designed for databases won’t work directly in Kafka.

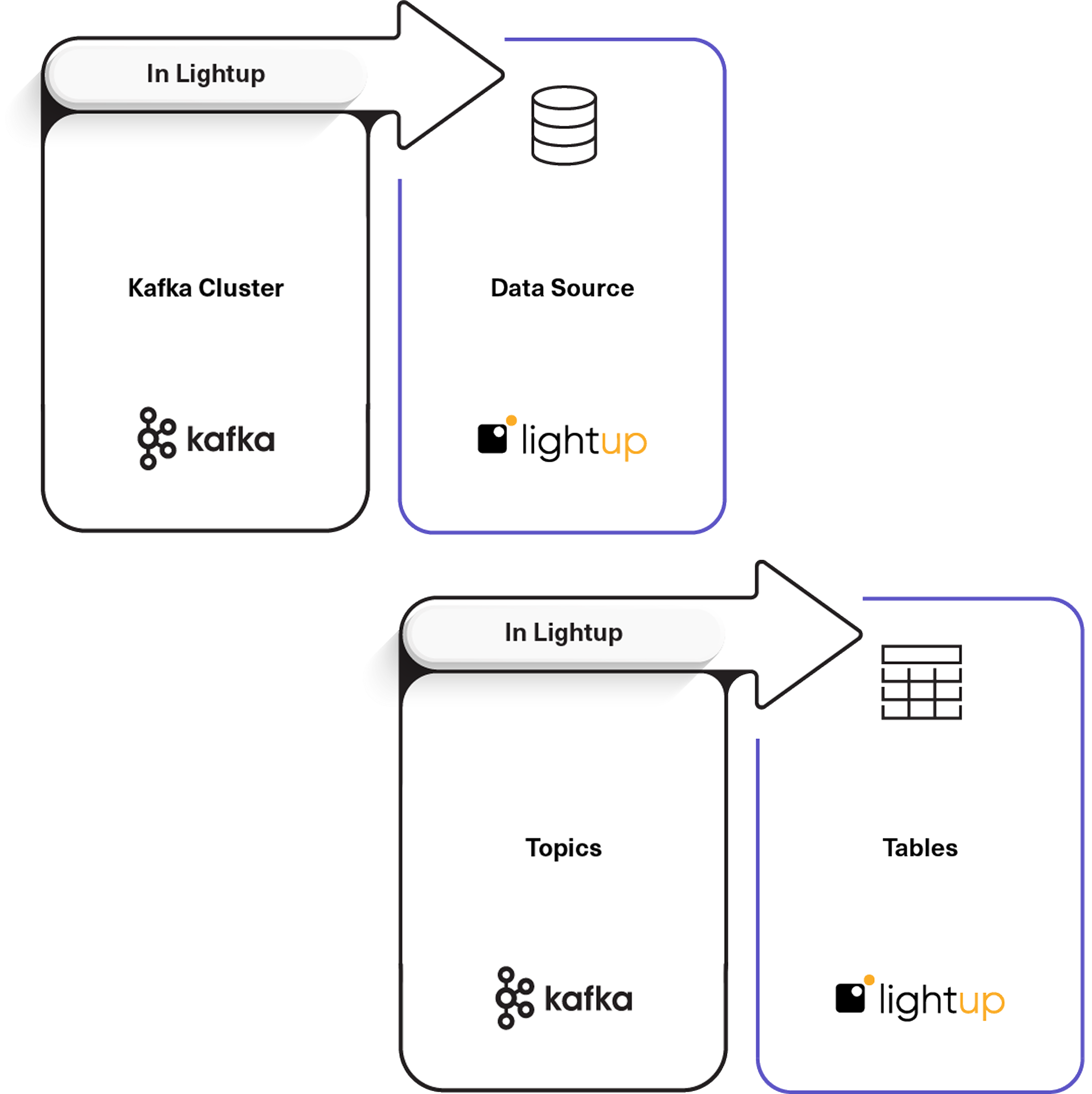

That’s where the Lightup connector for ksqlDB comes in, offering a way to read streaming data from all “Topics” stored in Kafka clusters by:

- Treating a Kafka cluster as a data source.

- Converting ksqlDB “Topics” to tables in Lightup.

- Creating a “Default” schema automatically for Kafka.
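The mapping described above can be pictured with a small sketch. This is an illustration only, not Lightup's implementation: the `TableRef` class, the `topics_to_tables` helper, and the `prod-kafka` cluster name are hypothetical, and only the “Default” schema name comes from the text.

```python
from dataclasses import dataclass

# Schema name taken from the text; everything else below is hypothetical.
DEFAULT_SCHEMA = "Default"


@dataclass(frozen=True)
class TableRef:
    """One Kafka topic viewed as a table inside a data source."""
    datasource: str  # the Kafka cluster, treated as a data source
    schema: str      # always the automatically created "Default" schema
    table: str       # the topic name, used as the table name

    def qualified_name(self) -> str:
        return f"{self.datasource}.{self.schema}.{self.table}"


def topics_to_tables(cluster_name, topics):
    """Map topic names to table references, de-duplicated and sorted."""
    return [TableRef(cluster_name, DEFAULT_SCHEMA, t) for t in sorted(set(topics))]


if __name__ == "__main__":
    for ref in topics_to_tables("prod-kafka", ["orders", "payments", "orders"]):
        print(ref.qualified_name())
```

The point of the sketch is that a topic has no schema namespace of its own, so a single default schema gives every topic a stable, table-like address.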

Lightup Connector for ksqlDB

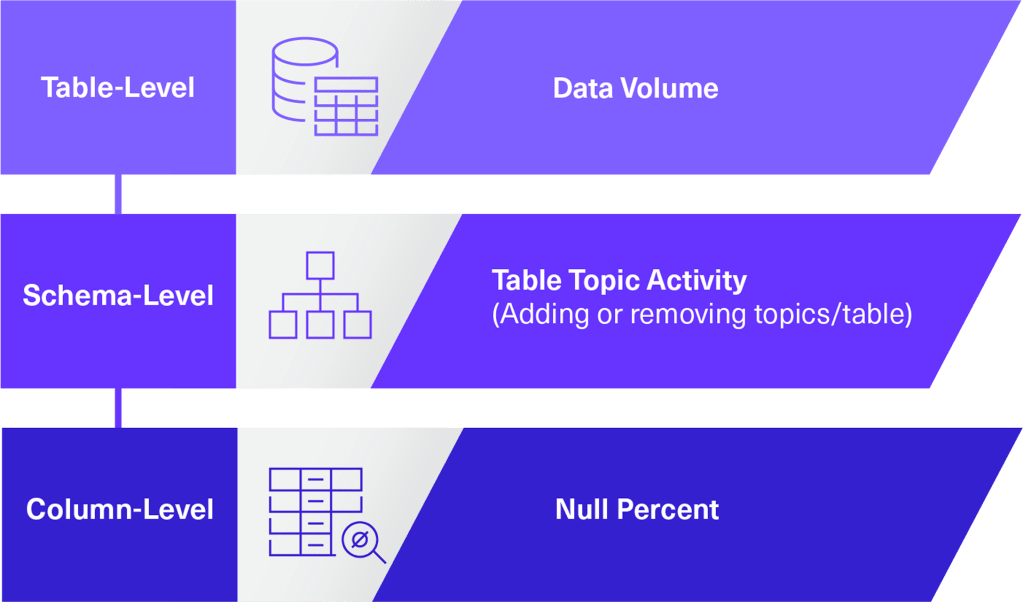

The Lightup connector for ksqlDB enables proactive Data Quality monitoring through metadata Auto Metrics on Tables, Schemas, and Columns for Kafka “Topics” (handled as Tables in Lightup).

Technical Requirements

- ksqlDB must be installed and configured for stream processing on Kafka clusters.

- A Kafka schema registry is necessary to get the schema of the values in each Topic.
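To see why the schema registry matters, here is a minimal sketch of how a topic's value schema could be turned into column names and types. It assumes a Confluent-compatible Schema Registry, the default subject naming of `<topic>-value`, and Avro record schemas. The helper names and the `localhost:8081` address are hypothetical, and this is not a description of how the Lightup connector itself works.

```python
import json
from urllib.parse import quote


def value_subject(topic):
    """Subject name for a topic's value schema under the default naming strategy."""
    return f"{topic}-value"


def latest_schema_url(registry_url, topic):
    """URL of the latest registered value schema for a topic."""
    subject = quote(value_subject(topic), safe="")
    return f"{registry_url.rstrip('/')}/subjects/{subject}/versions/latest"


def columns_from_response(response):
    """Extract (name, type) pairs from a registry response holding an Avro record.

    The registry returns the schema as a JSON-encoded string in the "schema" field.
    """
    schema = json.loads(response["schema"])
    if not isinstance(schema, dict) or schema.get("type") != "record":
        raise ValueError("expected an Avro record schema")
    columns = []
    for field in schema["fields"]:
        ftype = field["type"]
        # Complex types (unions, nested records) are kept as JSON text.
        columns.append((field["name"], ftype if isinstance(ftype, str) else json.dumps(ftype)))
    return columns


if __name__ == "__main__":
    # Sample response shaped like the registry's reply; no network call is made.
    sample = {
        "subject": "orders-value",
        "version": 1,
        "id": 1,
        "schema": json.dumps({
            "type": "record",
            "name": "Order",
            "fields": [
                {"name": "order_id", "type": "string"},
                {"name": "amount", "type": ["null", "double"]},
            ],
        }),
    }
    print(latest_schema_url("http://localhost:8081", "orders"))
    print(columns_from_response(sample))
```

Without a registered value schema, a topic is just a stream of opaque bytes, so there would be no columns to monitor.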

Key Benefits

Maintain Optimal Performance

Monitor Streaming Data

Prevent Downstream Outages

Get Started with Lightup Data Quality for ksqlDB